Key takeaways

- Voice cloning is not an audio feature, it is an operational media capability. Once content has to move through real production environments, multiple markets, approval loops, subtitle workflows, and legal review, the question shifts from generation quality to workflow design.

- The hard problem is no longer creating speech. It is managing that speech across languages, versions, rights, and distribution channels without losing control. Speech generation solves the creation step. It does not solve governance, versioning, or publishing.

- Efficiency gains are real in multilingual dubbing, recurring narration, incremental script updates, and dialogue-heavy interactive media. Continuity across markets becomes cheaper to maintain.

- Three risks are systematically underestimated. Solving generation but not control, treating localization as a language problem rather than a coordination problem, and ignoring governance (consent, usage rights, retention, disclosure) until it becomes a pressure point.

- The missing piece is rarely model quality. It is workflow design. Scaling multilingual media without control is not efficiency, it is hidden operational cost. A platform view on audio, subtitles, and metadata keeps the capability sustainable. alugha is built on that model.

Why voice cloning in content creation and media production is becoming an infrastructure decision

Voice cloning is a powerful technology. It can speed up localization, reduce production overhead, and make multilingual publishing far more efficient. I say that without any anti-AI reflex.

But that is also where many organizations stop thinking too early.

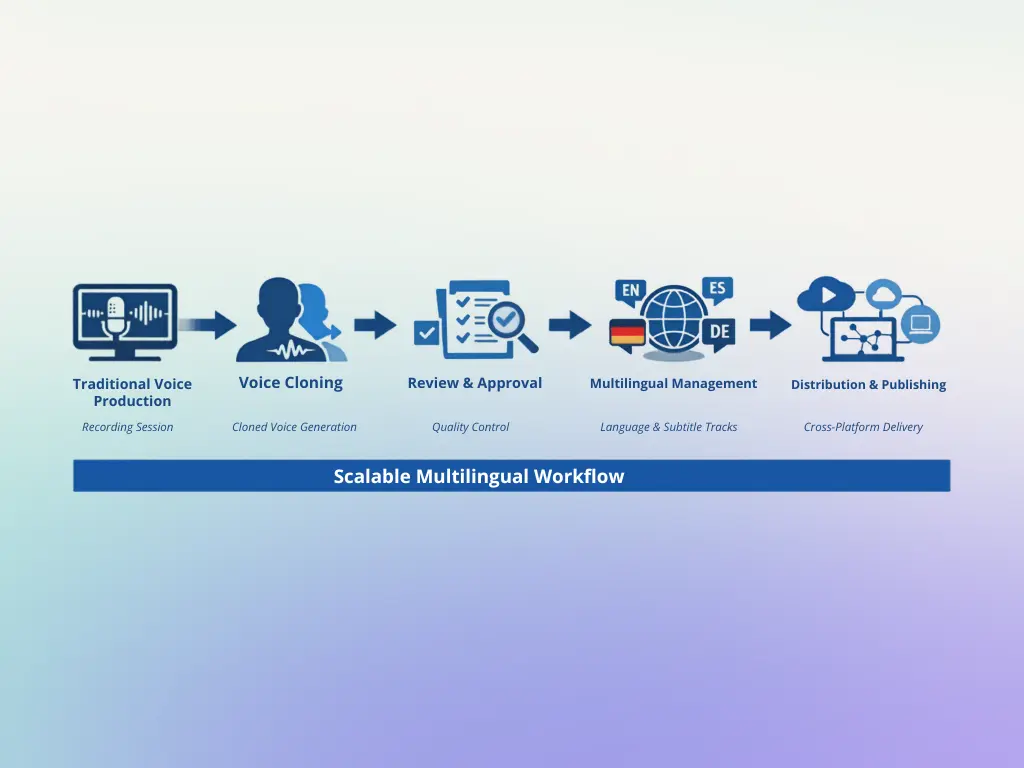

Most teams still treat voice cloning as an audio feature. It is not. At least not once content has to move through real production environments, multiple markets, approval loops, subtitle workflows, and legal review. At that point, voice cloning becomes something else entirely: an operational media capability.

That distinction matters. It matters for publishers. It matters for training teams. It matters for enterprises that produce onboarding videos, product explainers, webinars, podcasts, or game dialogue at scale. And it matters because the hardest problem is no longer generating speech. The harder problem is managing that speech across languages, versions, rights, and distribution channels without losing control.

I want to make three points. I want to explain where voice cloning creates real value. I want to show where many organizations underestimate the complexity. And I want to outline the questions decision-makers should now ask internally.

Voice cloning will not replace human creativity. But it is changing how modern media teams increase efficiency, maintain consistency, and extend global reach.

What voice cloning actually changes

The common belief is simple: voice cloning helps teams create audio faster. That is true. But it is only the first layer.

What voice cloning really changes is the production model around voice. Instead of treating every recording as a one-time event, organizations can begin to treat voice as a reusable media asset. That sounds efficient. In many cases, it is. But it also creates new dependencies.

A traditional workflow is linear. A speaker records. An editor cuts. A team reviews. The file is published. If something changes, the process starts again.

Voice cloning breaks that pattern. The voice can now be reused, adapted, translated, updated, and redistributed at a much larger scale. That is the advantage. It is also the new source of complexity.

This is why the technology is so attractive in content-heavy environments. Media teams are under pressure to publish more, localize more, and update more. At the same time, budgets are tight and timelines keep shrinking. Anyone running a multilingual content operation knows the problem: the content itself is often not the bottleneck. The bottleneck is what happens between revision and release.

Voice cloning reduces that pressure in several areas:

- multilingual dubbing and localization

- recurring narration across large content libraries

- version updates for existing media assets

That sounds obvious. It is. But the operational consequence is often missed: once voice can scale, the workflow around voice has to scale as well.

What voice cloning solves well

Let us start with the concession, because it matters. Voice cloning solves real problems.

It can make multilingual dubbing far more efficient. A team that would once have needed separate recording workflows for each market can now move faster and often at lower marginal cost. That is highly relevant for training libraries, product demos, webinars, educational content, and recurring branded media.

It can also improve continuity. Many organizations do not want five different generic voices across five markets. They want a stable presentation style. They want the content to still sound like the same brand, the same narrator, or the same character. Good voice cloning can support that much better than generic text-to-speech.

Narration is another clear use case. E-learning providers with catalogues of 200+ modules, documentary producers, internal communications teams, and audiobook publishers often rely on consistent voice identity over time. In traditional production, that consistency is expensive to maintain. Schedules change. Talent becomes unavailable. Re-recordings create friction. Voice cloning can reduce that problem.

It is particularly strong when content changes often. And most enterprise content does. Product names change. Features change. Compliance language changes. Interfaces change. Numbers become outdated. In a conventional workflow, even a small script change may require new studio time and additional post-production. That is inefficient. Voice cloning makes incremental revision much easier.

Gaming and interactive media add another dimension. Dialogue-heavy environments depend on continuity, scale, and flexibility. Placeholder lines, late script changes, multilingual rollouts, and character consistency all create production strain. Voice cloning can reduce part of that strain. Not all of it. But enough to matter.

Short version: the efficiency gains are real.

Better, but not enough

This is where the conversation usually becomes too optimistic.

The assumption is that once speech generation works, the core problem is solved. It is not.

Speech generation solves the creation problem. It does not automatically solve the governance problem, the versioning problem, the localization problem, or the publishing problem. In fact, it can intensify them.

That is the structural point many teams miss. Voice cloning does not simply replace one production step. It expands the scale at which voice assets can be created. And that means every downstream weakness becomes more visible.

A few audio files are manageable. A multilingual media operation is not.

Once organizations begin producing cloned voice outputs across multiple languages, several operational issues appear very quickly. First, versions multiply. Teams now have the source script, translated scripts, reviewed scripts, subtitle files, draft voice outputs, approved voice outputs, updated versions, and region-specific variants. That is not a creative problem. It is a systems problem.

Second, ownership becomes less clear. Who approves the cloned voice? Who confirms that tone, pronunciation, and legal wording are correct? Who decides when a revised track replaces a previous one? In many organizations, there is no clear answer.

Third, distribution fragments. Content may live on websites, in LMS platforms, inside support centers, on social channels, in apps, and in internal systems. If each language version becomes a separate asset, complexity grows rapidly. Maintenance becomes harder. Analytics fragment. Teams lose visibility.

That is why voice cloning should not be treated as a standalone AI feature. It is part of media infrastructure.

What is media infrastructure?

Media infrastructure is the operational layer that governs how video, audio, subtitles, metadata, permissions, and versions are managed over time. It is not just storage. It is the system that determines whether multilingual media remains controllable once scale increases.

That is the real issue.

The three risks companies underestimate

1. They solve generation, but not control

The first mistake is assuming faster generation equals better workflow. It does not.

A company may produce multilingual audio quickly and still end up with file sprawl, inconsistent naming, duplicate uploads, unclear approval states, and broken update logic. The result is not efficiency. It is hidden operational cost.

That matters especially in enterprise settings, where content is rarely static and rarely lives in one place.

2. They treat localization as a language problem

Localization is not only about language. It is about coordination.

A translated voice track still has to match the right subtitle version, the right release state, the right compliance wording, and the right distribution environment. If those layers are disconnected, quality drops even when the audio sounds good.

That is why “the voice sounds fine” is not a sufficient success metric.

3. They ignore governance until it is too late

Voice cloning raises practical governance questions immediately. Consent. Usage rights. Retention. Review. Disclosure. Access control. None of these are theoretical.

Who owns the voice model? Under what conditions can it be reused? How is AI-generated content labeled? Who has permission to trigger outputs? What happens when a voice should no longer be used?

Organizations that do not answer those questions early usually answer them later under pressure.

This is not a legal footnote

Voice cloning is not only a workflow issue. It is also a governance issue.

Consent is the first principle. No voice should be cloned commercially without explicit permission and clear rules around use, scope, and duration. That should be obvious. In practice, it is often handled too casually.

Human review is the second principle. Even very strong systems can produce awkward emphasis, wrong pronunciation, or a tone that feels slightly off. That may be acceptable in some internal contexts. It is often not acceptable in public-facing or brand-sensitive media.

Transparency is the third principle. Not every use case requires the same disclosure standard. But in many settings, stakeholders should understand when voice output is AI-assisted or AI-generated. Trust is easier to preserve than to rebuild.

This is not alarmism. It is operational maturity.

The three questions every media leader should ask now

The right question is not whether voice cloning may be used. The right question is where and under which conditions it becomes sustainable.

Three internal questions help clarify that quickly:

- Where are our multilingual voice assets actually managed today?

- Who owns approval for cloned voice outputs across language versions?

- Can we update one media asset across several markets without duplicating the entire publishing workflow?

If those questions cannot be answered clearly, the issue is not the AI model. The issue is the surrounding system.

Why this belongs beyond the creative team

I do not want to argue against voice cloning. Quite the opposite. Used well, it is a highly practical capability.

But it should not be treated as a creative side project alone. Once organizations depend on cloned voice across markets and channels, the topic moves beyond studio efficiency. It touches governance, publishing logic, asset control, and long-term maintainability.

That is why voice cloning increasingly belongs not just in production discussions, but in broader media operations and digital infrastructure discussions.

The good news is that the market has matured. Teams no longer have to choose between experimentation and control. The missing piece is usually not generation quality. It is workflow design.

That is where a platform like alugha becomes relevant. Not because it is “about AI,” but because it gives organizations a structured way to manage multilingual video, multiple audio tracks, and subtitles in one environment. That is a media infrastructure answer to a workflow problem.

Voice cloning is not just changing how speech is created. It is changing what scalable media operations require.

FAQ

What is voice cloning for content creation?

Voice cloning for content creation is the use of AI-generated voice models to produce narration, dubbing, and voiceover at scale, preserving a consistent speaker identity across languages, versions, and updates. Applied properly, it is an operational media capability, not a standalone audio feature. The value comes from the workflow around the voice (asset management, version control, subtitle alignment, approval, distribution), not from the speech generation step alone.

How does voice cloning change multilingual content workflows?

It turns a linear workflow (record, edit, review, publish, re-record when anything changes) into a reusable-asset model where a single voice model can feed localized tracks across markets, accept incremental script edits, and update existing assets without re-recording. That compresses production time and lowers marginal cost per additional language. It also shifts the bottleneck from creation to coordination.

What are the main risks of voice cloning at enterprise scale?

Three risks are systematically underestimated: (1) solving generation but not control, which produces file sprawl and unclear approval states, (2) treating localization as a language problem when it is a coordination problem across subtitle, release-state, and compliance layers, and (3) ignoring governance (consent, usage rights, retention, disclosure, access control) until an incident forces the conversation. Voice cloning does not create these risks, it amplifies them by expanding scale.

How does alugha approach voice cloning for content creation?

alugha treats voice cloning as part of media infrastructure, not as a standalone AI feature. The platform manages multilingual video, multiple audio tracks, subtitles, metadata, permissions, and versions in one environment, so voice cloning can scale without creating workflow debt. The platform runs on EU-only infrastructure, supports GDPR-compliant delivery, and ships DPA terms for enterprise procurement. Current plans and capabilities on alugha.com/plans.

This is a satellite article. For the full pillar, see Voice Cloning for Enterprises: Technology, Ethics & GDPR Compliance.